Introduction

Note: This is Part 3 of 3 of our tutorial series about Remix Run. In case you missed it, you can read Part 1 and Part 2 here.

As we wrap up this series, we will build on what we learned about Remix in Part 1 and Part 2: we will combine Remix with a Redis cache and walk through some examples you can adapt for your own applications.

In this post, we will cover:

- What are caches and what is Redis

- Setting up Redis using Docker

- Using Redis in our Remix app

- Implementing a cache logic using Redis

Remix is already fast on its own, but caching can make it even faster. Because each page has its own loader function, you can add a cache to pages that don't change often (e.g. the 'About Us' page).

Before we get started, I strongly suggest you get the code from Part 2 since we will implement the cache in this code.

The code is available on my GitHub repository. After cloning the code on your machine, don't forget to execute npm install, followed by npm run dev.

What are caches? What is Redis?

Generally, a cache is a layer in the software architecture that stores ephemeral data, usually with a configurable expiration time. Once that time has passed, the data is deleted. Redis is a cache service that keeps data in memory, which makes read and write operations much faster than disk or other types of long-term storage.

In a typical HTTP request, a backend service will use the file system to read data from the storage hardware (e.g. a hard disk or a solid-state drive), apply some logic and transformations, and then return a response. If you do the same operation but read the data from memory, you can improve the response time significantly. As a rough guide, DRAM has a transfer rate of around 32GB/s while an SSD has a rate of around 0.5GB/s; in other words, by these figures DRAM is roughly 64x faster than an SSD.

Before we continue, let's take a look at an example to illustrate this process. Imagine you have a website where a page loads 100 products through a backend service that reads from a database, with a response time of 75 milliseconds.

Scenario A: No Cache

Without a cache, every time a user accesses the website, the request will hit the database to retrieve the data, which, as we learned, is an expensive operation. If 10 users access the website at a given time, 10 requests will consume resources from the database.

Credits: Redis

Scenario B: With Cache

For every request, the backend service will check whether the data is already cached in memory. When it is not, the data is retrieved from the database, stored in the in-memory cache, and then returned in the response. In other words, of the 10 users accessing the website, only the first will need to wait for data from the database, while the other nine will be served from memory.

When talking about caches, there are a couple of specific terms that describe the cache lifecycle. The two main ones are:

Cache hit: When data is served from the cache.

Cache miss: When the data is not in the cache, so the system must go to the original source of the data to respond.
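To make Scenario B concrete, here is a minimal sketch of the check-then-fill flow using a plain in-memory Map instead of Redis. The names loadProductsFromDatabase, getProducts, and simulateUsers are hypothetical and exist only for this illustration; the "database" is a stand-in that simply counts how often it is called.

```typescript
// Hypothetical stand-in for a slow database query; it counts its own calls.
let databaseCalls = 0;
async function loadProductsFromDatabase(): Promise<string[]> {
  databaseCalls += 1;
  return ["product-1", "product-2", "product-3"];
}

// A tiny in-memory cache: each entry carries an absolute expiry timestamp.
type CacheEntry = { value: string[]; expiresAt: number };
const cache = new Map<string, CacheEntry>();

let hits = 0;
let misses = 0;

async function getProducts(ttlMs: number): Promise<string[]> {
  const entry = cache.get("products");
  if (entry && entry.expiresAt > Date.now()) {
    // Cache hit: serve the stored value without touching the database.
    hits += 1;
    return entry.value;
  }
  // Cache miss: read from the source, then store the result for later requests.
  misses += 1;
  const value = await loadProductsFromDatabase();
  cache.set("products", { value, expiresAt: Date.now() + ttlMs });
  return value;
}

// Simulates `count` users requesting the page one after another.
async function simulateUsers(count: number) {
  for (let i = 0; i < count; i++) {
    await getProducts(30000);
  }
  return { hits, misses, databaseCalls };
}
```

With 10 simulated users inside the expiration window, only the first request should reach the stand-in database; the remaining nine are cache hits.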

Redis setup using Docker

Now, let's set up a Redis instance using Docker. At the end of this section, you will have an instance of Redis Server running on your machine, so you can use it in the code for our project.

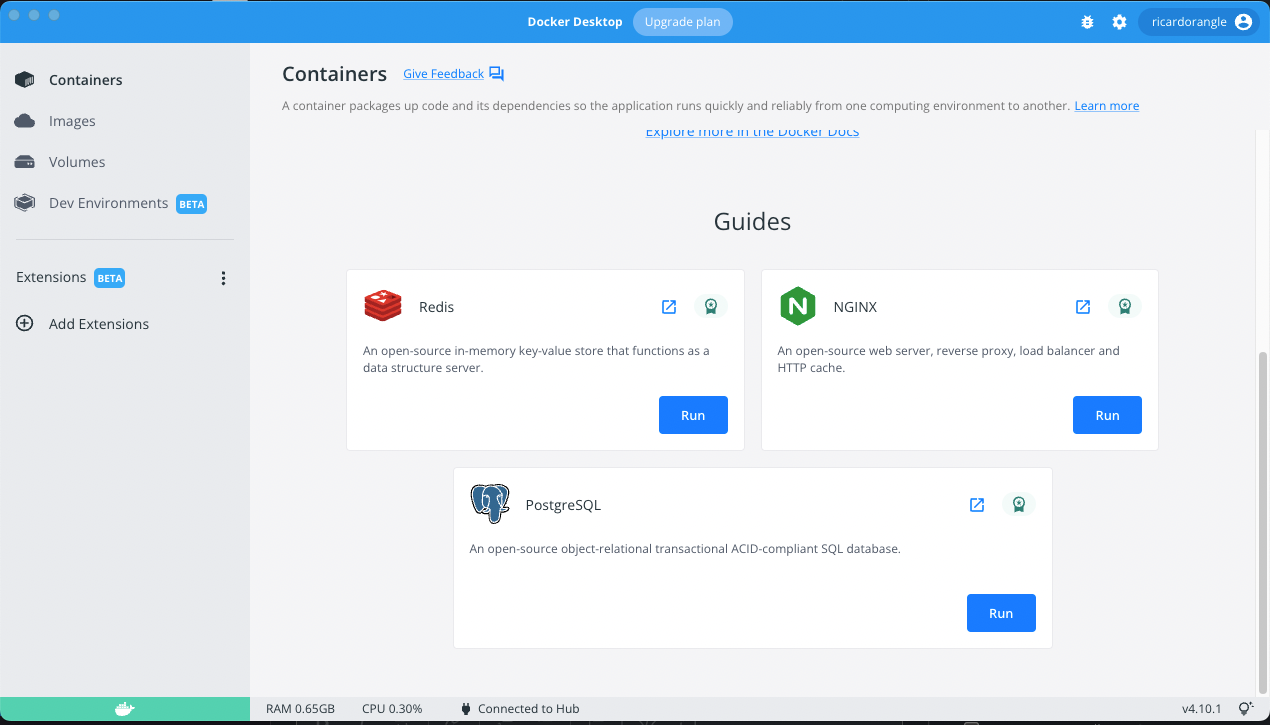

Step 1) Download and install Docker Desktop on your computer.

Step 2) As soon as Docker is running on your computer, click "Containers" and then select "RUN" Redis.

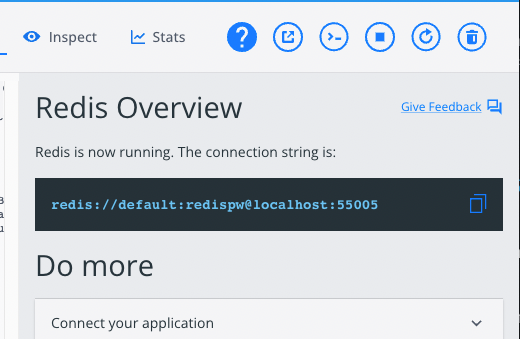

Docker will create a container and show its Redis configuration, such as the connection string.

Note the connection string above follows this pattern:

redis://<username>:<password>@<hostname>:<port>

In the screenshot above, the values are:

- Username is default

- Password is redispw

- Hostname is localhost

- Port is 55005
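If you prefer to keep the connection string in one piece, the pattern above can be split into its parts with the standard URL class. This is only a sketch: parseRedisUrl and RedisConnectionOptions are hypothetical names, and falling back to port 6379 (the Redis default) when no port is given is an assumption of this example.

```typescript
// Shape of the values we need later when configuring a Redis client.
interface RedisConnectionOptions {
  host: string;
  port: number;
  username: string;
  password: string;
}

// Splits redis://<username>:<password>@<hostname>:<port> into its parts.
function parseRedisUrl(connectionString: string): RedisConnectionOptions {
  const url = new URL(connectionString);
  if (url.protocol !== "redis:") {
    throw new Error(`Unexpected protocol: ${url.protocol}`);
  }
  return {
    host: url.hostname,
    // Assumption: use the default Redis port when the string omits one.
    port: url.port ? Number(url.port) : 6379,
    // Credentials are percent-encoded inside URLs, so decode them.
    username: decodeURIComponent(url.username),
    password: decodeURIComponent(url.password),
  };
}

const options = parseRedisUrl("redis://default:redispw@localhost:55005");
```

Here options holds the same four values listed above: default, redispw, localhost, and 55005.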

You will need this information later in this post when configuring the Redis client in our code.

Using Redis in our Remix App

In this section, our goals are to:

- Add a timer on our home page to measure the response time, and then compare the results with and without cache

- Configure a Redis client in our code.

- Implement logic to cache data, with an expiration policy of 30 seconds.

We will use ioredis, a robust, performance-focused and full-featured Redis client for Node.js.

Using your terminal of choice, make sure you're at the project root folder - where you cloned the repository. Execute the install command:

npm i ioredis

Next, create a new folder named utils inside ./app/shared/, then create a new file named redis.tsx inside the utils folder.

Add the following code to the file, making sure you change the port, host, username, and password to match the values provided by Docker when you ran the Redis container.

    // app/shared/utils/redis.tsx
    import Redis from "ioredis";

    // Replace these values with the ones Docker shows for your own container.
    const redisClient = new Redis({
      port: 55005,
      host: "localhost",
      username: "default",
      password: "redispw",
    });

    export default redisClient;

ℹ️ Note: This configuration is only an example of how to run Redis on your local machine. Deploying it in a production environment will require a Redis server or a cloud solution like AWS MemoryDB for Redis. We will not cover this setup in this post.

Adding a timer to our home page

Open the file app/routes/index.tsx (a.k.a. our home page).

Inside the Remix loader function, we will measure the response time by capturing the current timestamp t0 just before querying the database. Once the query returns, we will capture the current timestamp as t1, so that our response time is t1 - t0 milliseconds. We will display the response time as part of the cover title. See below:

    // app/routes/index.tsx
    export const loader: LoaderFunction = async () => {
      // Time before loading
      const t0 = new Date().getTime();

      // Load the data through Prisma
      const data = await db.toy.findMany({
        include: {
          images: true,
        },
      });

      // Time after loading
      const t1 = new Date().getTime();

      // Response time result
      const responseTime = `${t1 - t0}ms`;

      // Return the data to our template
      return json({ toys: data, responseTime: responseTime });
    };

    ...

    ...

    export default function Index() {
      const data = useLoaderData();
      return (
        <>
          {/* We are going to add the result in our Cover Title */}
          <Cover
            title={`Star Wars Toys - Loading Time: ${data.responseTime}`}
            image={
              "https://images.unsplash.com/photo-1608983765214-3fb32be57d29?ixlib=rb-1.2.1&ixid=MnwxMjA3fDB8MHxwaG90by1wYWdlfHx8fGVufDB8fHx8&auto=format&fit=crop&w=2069&q=80"
            }
          />
          <StyledHomeProductContainer>
            <ProductGrid toys={data.toys} />
          </StyledHomeProductContainer>
        </>
      );
    }

After saving the changes in our code, refresh the page a few times to check the response time (see below):

As you can see, on my machine, the response time is between 4ms and 11ms with no cache.
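If you want to time more than one loader, the t0/t1 pattern can be pulled into a small helper. The sketch below is not part of the tutorial's repository; timed is a hypothetical name, and it uses Date.now(), which returns the same millisecond timestamp as new Date().getTime().

```typescript
// Runs an async task and reports how long it took, formatted like "7ms".
async function timed<T>(
  work: () => Promise<T>
): Promise<{ result: T; responseTime: string }> {
  const t0 = Date.now(); // timestamp before the work starts
  const result = await work();
  const t1 = Date.now(); // timestamp after the work finishes
  return { result, responseTime: `${t1 - t0}ms` };
}

// Example with a stand-in task instead of a real database query.
const measurement = timed(async () => ["toy-1", "toy-2"]);
```

In a loader, the work callback would be the Prisma query, and responseTime could be passed to json() exactly as in the code above.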

Implementing Cache Logic Using Redis

Now, we will extend the loader function to include the necessary logic to check, use, and create the cache upon requests. The final version of the code should look like this:

    // app/routes/index.tsx
    // Assumes the default Remix "~" alias, which points to the ./app folder.
    import redisClient from "~/shared/utils/redis";

    export const loader: LoaderFunction = async () => {
      // Step 1 - Define a key to store the cache
      const cacheKey = "allToys";
      let dataRecords = [];
      const t0 = new Date().getTime();

      // Step 2 - Retrieve the cache using the key for all toys
      const toysCache = await redisClient.get(cacheKey);

      // Step 3 - Check if we have a Cache Hit
      if (toysCache) {
        // Step 3.A - Use data from cache
        dataRecords = JSON.parse(toysCache);
      } else {
        // Step 3.B.1 - Use data from the Database
        dataRecords = await db.toy.findMany({
          include: {
            images: true,
          },
        });

        // Step 3.B.2 - Set Cache for 30 seconds
        // (awaited so that a failed write is not silently dropped)
        await redisClient.set(cacheKey, JSON.stringify(dataRecords), "EX", 30);
      }

      const t1 = new Date().getTime();
      const responseTime = `${t1 - t0}ms`;

      // Step 4 - Return the data
      return json({ toys: dataRecords, responseTime: responseTime });
    };

Note the steps as comments in the code, which I've added to make it easier to explain what's happening.

Step 1

Redis stores data as key/value pairs, much like a dictionary. The key is a unique identifier for the value you are storing, and you use it to get the content back. If you set a value for a key that already has one, the existing value is overwritten.

For simplicity, we will use allToys as our key. Such a short, generic key name is not recommended for real scenarios, where a good naming convention is very important for a proper cache strategy. It's a complex topic, which we won't explore in this post. For more information, the Redis documentation about keys is a good place to start: redis.io
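As one illustration of a naming convention, many Redis users build keys from colon-separated segments such as an object type followed by an identifier. The helper below is a hypothetical sketch of that idea and is not used elsewhere in this tutorial.

```typescript
// Joins key segments with ":" so related keys share a predictable prefix.
function buildCacheKey(...segments: Array<string | number>): string {
  if (segments.length === 0) {
    throw new Error("At least one key segment is required");
  }
  return segments.map(String).join(":");
}

const allToysKey = buildCacheKey("toys", "all"); // "toys:all"
const singleToyKey = buildCacheKey("toy", 42); // "toy:42"
```

A shared prefix like this makes it easier to tell which part of the application a cached value belongs to.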

Step 2

We ask Redis to get the content associated with our allToys key. If there is no content associated with our key, the command returns null.

Step 3

We check the value obtained in the previous step. If it is truthy, we have a Cache Hit (Step 3.A), so we can use the cached data in our response later. Note that we stored the value for our key as a JSON string, so we parse it back into a JavaScript object. Redis supports different data types. For more details, we recommend reading the official documentation here: redis.io

If the value is falsy (Step 3.B.1), we use Prisma to retrieve the data from the database. Right after (Step 3.B.2), we create the cache entry with an expiration of 30 seconds. Note that we store the data as a JSON string. Also note that EX is an optional parameter that instructs Redis to expire the value for our key N seconds after it is set. For other SET options, check the documentation here: redis.io

Step 4

We return a JSON response containing the toys and the time taken to generate the results.

Save the code and refresh the page a couple of times to see what happens.

As you can see, on my machine, we now have a response time of 0 milliseconds (that is, less than one millisecond at the resolution of our timer). Of course, in a real scenario, with requests travelling over the internet, achieving 0 milliseconds is impossible.
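Since the same four steps apply to any page, they can be wrapped in a reusable helper. The following is a minimal sketch under stated assumptions: CacheClient, getOrSetCache, and InMemoryCacheClient are hypothetical names. The interface is meant to mirror the get and set calls used in the loader above, and the in-memory client is only a stand-in so the helper can be tried without a Redis server.

```typescript
// Minimal subset of a Redis-like client, mirroring the calls used in the loader.
interface CacheClient {
  get(key: string): Promise<string | null>;
  set(key: string, value: string, mode: "EX", seconds: number): Promise<unknown>;
}

// Steps 1 to 4 in one place: look up the key, fall back to the loader, store, return.
async function getOrSetCache<T>(
  client: CacheClient,
  key: string,
  ttlSeconds: number,
  loadFresh: () => Promise<T>
): Promise<{ data: T; cacheHit: boolean }> {
  const cached = await client.get(key);
  if (cached !== null) {
    // Cache hit: the value was stored as a JSON string, so parse it back.
    return { data: JSON.parse(cached) as T, cacheHit: true };
  }
  // Cache miss: load from the original source and cache it with an expiration.
  const data = await loadFresh();
  await client.set(key, JSON.stringify(data), "EX", ttlSeconds);
  return { data, cacheHit: false };
}

// Hypothetical in-memory stand-in for Redis, for local experiments only.
class InMemoryCacheClient implements CacheClient {
  private store = new Map<string, { value: string; expiresAt: number }>();

  async get(key: string): Promise<string | null> {
    const entry = this.store.get(key);
    if (!entry || entry.expiresAt <= Date.now()) {
      this.store.delete(key);
      return null;
    }
    return entry.value;
  }

  async set(key: string, value: string, _mode: "EX", seconds: number): Promise<unknown> {
    this.store.set(key, { value, expiresAt: Date.now() + seconds * 1000 });
    return "OK";
  }
}
```

In the loader, the loadFresh callback would be the Prisma query, and the client would be the redisClient configured earlier, provided it fits the CacheClient shape.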

Conclusion

Remix is an extraordinary framework. It is light, fast, and really easy to use.

In this post, we built a simple example of caching with Remix.

Using Remix, you can define your own caching logic for each page in your application, which is extremely useful.

Combining Remix with other technologies can improve your app even more. We saw this when the home page loaded in 0ms (no loading spinners required 🙂).

If you need the final code of this post, the link is below:

GitHub - ricardohsilva/remix-tutorial-1 at part-3

Hope you have enjoyed this Remix Series.